Minimizing Regret

Hazan Lab @ Princeton University

Research

Sequence Prediction and Spectral Transformers

- Recent research from our lab connects learning in dynamical systems to sequence perdiction via a new architecture called Spectral Transformers, more information and recent publications in this link.

Control and Reinforcement Learning

-

A new framework for robust control called Non-Stochastic Control. This permits control in adversarial environments via a new type of algorithm, the Gradient Perturbation Controller, which also gives rise to the first logarithmic regret in online control.

In this framework we can also control with unknown systems and partially observed states. Recent additions include Non-Stochastic Control with Bandit Feedback , Black-Box Control for Linear Dynamical Systems , Adaptive Regret for Control of Time-Varying Dynamics

For a survey, see this book draft.

-

Combining time series and control algorithms via the new technique of Boosting for Dynamical Systems.

-

The Spectral Filtering, technique, and its application to asymmetric linear dynamical systems.

-

Maximum-entropy exploration in partially observed and/or approximated Markov Decision Processes.

-

Machine learning methods for control of medical ventilator, see press release.

Optimization for Machine Learning

Machine learning moves us from the custom-designed algorithm to generic models, such as neural networks, that are trained by optimization algorithms. For a survey see these lecture notes. Some of the most useful and efficient methods for training convex as well as non-convex methods that we have worked on include:

-

The AdaGrad algorithm, and the technique of adaptive preconditioning.

-

Sublinear optimization algorithms for linear classification, training support vector machines, semidefinite optimization and to other problems.

-

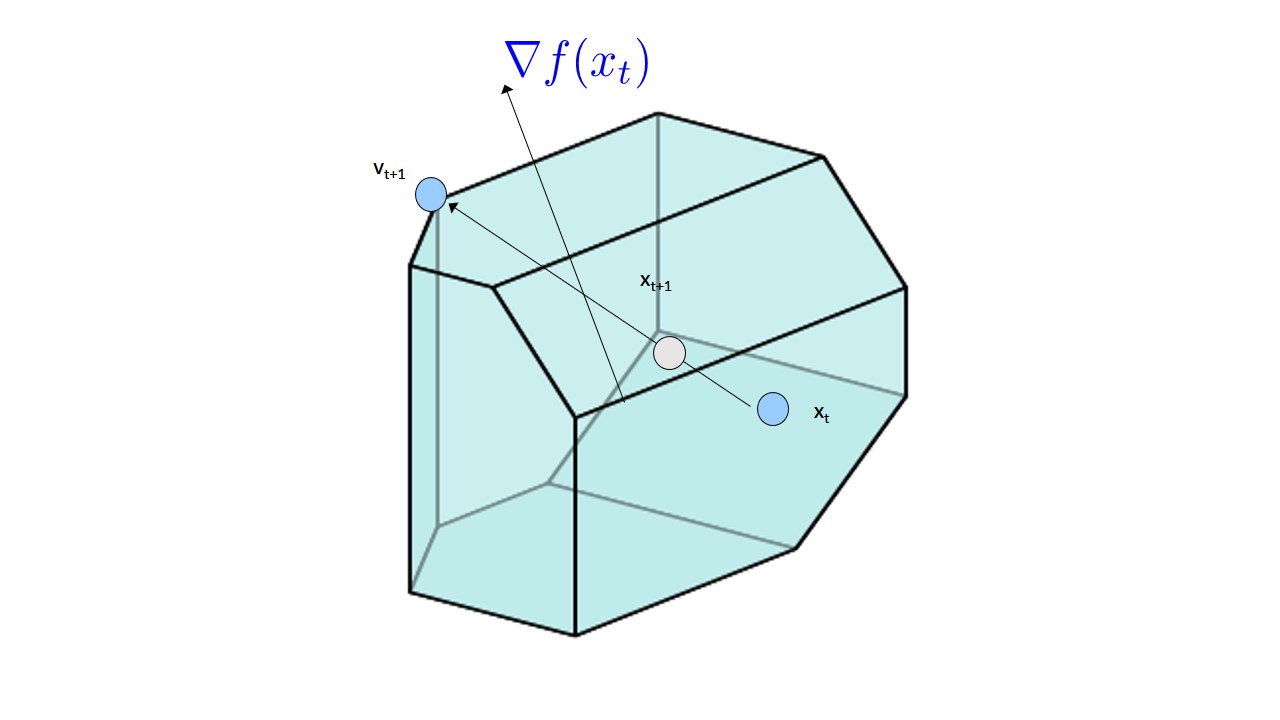

Projection-free algorithms for online learning in the context of recommender systems, and the first linearly convergent projection-free algorithm.

Online Convex Optimizatiom

In recent years, convex optimization and the notion of regret minimization in games, have been combined and applied to machine learning in a general framework called online convex optimization. For more information see graduate text book on online convex optimization in machine learning, or survey on the convex optimization approach to regret minimization. Our research spans efficient online algorithms as well as matrix prediction algorithms, and decision making under uncertainty and continuous multi-armed bandits.

Share on: